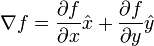

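Mathematically speaking, the gradient magnitude, or in other words the norm of the gradient vector, measures the rate of change (i.e. the slope) of a 2D signal along its direction of steepest change. This is quite clear in the definition of the gradient given by Wikipedia:

$$\nabla f = \frac{\partial f}{\partial x}\,\hat{x} + \frac{\partial f}{\partial y}\,\hat{y}$$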

Here, f is the 2D signal and x^, y^ (this is ugly, I'll write them ux and uy in the following) are the unit vectors in the horizontal and vertical directions, respectively.

In the context of images, the 2D signal (i.e. the image) is discrete rather than continuous, so the derivative is approximated by a finite difference: the intensity of the current pixel minus the intensity of the previous pixel in the considered direction. (There are several other ways to approximate the derivative, such as central differences or the Sobel operator, but let's keep it simple.) Hence, we can approximate the gradient by the following quantity:

$$\nabla f(u,v) \approx \big[f(u,v) - f(u-1,v)\big]\,\mathbf{u}_x + \big[f(u,v) - f(u,v-1)\big]\,\mathbf{u}_y$$

In this case, the gradient magnitude is the following:

$$\lVert \nabla f(u,v) \rVert \approx \sqrt{\big[f(u,v) - f(u-1,v)\big]^2 + \big[f(u,v) - f(u,v-1)\big]^2}$$
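
To make the formula concrete, here is a minimal NumPy sketch of the backward-difference gradient magnitude. It assumes the image is a 2D array indexed as `img[v, u]` (row = vertical position v, column = horizontal position u) and that border pixels with no previous neighbour get a difference of 0; both choices, as well as the function name `gradient_magnitude`, are mine and not part of the definition above.

```python
import numpy as np

def gradient_magnitude(img):
    """Backward-difference gradient magnitude of a 2D image.

    Assumption: img[v, u] with v = row (vertical), u = column (horizontal).
    Pixels on the first row/column have no previous neighbour, so their
    difference in that direction is set to 0.
    """
    img = np.asarray(img, dtype=float)
    gx = np.zeros_like(img)
    gy = np.zeros_like(img)
    gx[:, 1:] = img[:, 1:] - img[:, :-1]  # f(u,v) - f(u-1,v)
    gy[1:, :] = img[1:, :] - img[:-1, :]  # f(u,v) - f(u,v-1)
    return np.sqrt(gx**2 + gy**2)

img = np.array([[10, 12],
                [11, 15]])
mag = gradient_magnitude(img)
print(mag)
```

For the bottom-right pixel the horizontal difference is 15 - 11 = 4 and the vertical difference is 15 - 12 = 3, so its gradient magnitude is sqrt(4² + 3²) = 5.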

To summarize, the gradient magnitude is a measure of the local intensity change at a given point; it has little to do with a radius or with the width/height of the image.
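
As a quick sanity check of that last point, the sketch below builds the same vertical step edge (intensity 0 on the left, 50 on the right) in a small and a large image and compares the largest gradient magnitude in each. The helper names `grad_mag` and `step_image`, the image sizes, and the zero-difference border handling are illustrative assumptions.

```python
import numpy as np

def grad_mag(img):
    """Backward-difference gradient magnitude (border differences are 0)."""
    img = np.asarray(img, dtype=float)
    # Prepending the first column/row makes the first difference equal to 0.
    gx = np.diff(img, axis=1, prepend=img[:, :1])
    gy = np.diff(img, axis=0, prepend=img[:1, :])
    return np.hypot(gx, gy)

def step_image(height, width, low=0.0, high=50.0):
    """Image whose left half is `low` and right half is `high`."""
    img = np.full((height, width), low)
    img[:, width // 2:] = high
    return img

small = grad_mag(step_image(4, 6))
large = grad_mag(step_image(40, 60))
flat = grad_mag(np.full((4, 6), 50.0))

print(small.max(), large.max(), flat.max())  # 50.0 50.0 0.0
```

Both step images give the same maximum of 50, the height of the intensity jump, regardless of their size, while the constant image gives 0 everywhere.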