In an OpenGL ES app I'm working on, I noticed that glReadPixels() does not work on every device/simulator combination. To test this, I created a bare-bones sample OpenGL app. I set the background color on an EAGLContext and tried to read the pixels back using glReadPixels() as follows:

    int bytesPerPixel = 4;
    int bufferSize = _backingWidth * _backingHeight * bytesPerPixel;
    void* pixelBuffer = malloc(bufferSize);

    glReadPixels(0, 0, _backingWidth, _backingHeight, GL_RGBA, GL_UNSIGNED_BYTE, pixelBuffer);

    // pixelBuffer should now have meaningful color/pixel data, but it's null for iOS 7 devices

    free(pixelBuffer);
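
One way to tell "glReadPixels wrote zeros" apart from "glReadPixels wrote nothing at all" is to pre-fill the buffer with a sentinel value and check it afterwards. The following is only a minimal sketch in plain C; the names `READBACK_SENTINEL`, `readback_prepare` and `readback_was_written` are hypothetical helpers and not part of OpenGL ES or the sample project.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical sentinel byte: a value the scene is unlikely to
   produce in every channel of every pixel. */
#define READBACK_SENTINEL 0xAB

/* Fill the buffer with the sentinel before calling glReadPixels. */
void readback_prepare(unsigned char *buf, size_t len) {
    assert(buf != NULL);
    memset(buf, READBACK_SENTINEL, len);
}

/* Returns 1 if any byte differs from the sentinel, meaning
   something wrote into the buffer; returns 0 if it is untouched. */
int readback_was_written(const unsigned char *buf, size_t len) {
    assert(buf != NULL);
    for (size_t i = 0; i < len; i++) {
        if (buf[i] != READBACK_SENTINEL) {
            return 1;
        }
    }
    return 0;
}
```

The idea would be to call `readback_prepare(pixelBuffer, bufferSize)` before glReadPixels and `readback_was_written(pixelBuffer, bufferSize)` right after it. Calling glGetError() immediately after glReadPixels should also show whether GL itself reported an error for the read.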

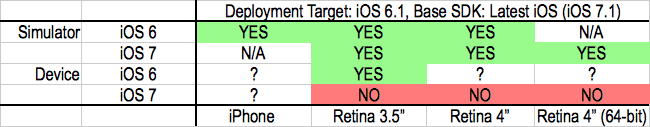

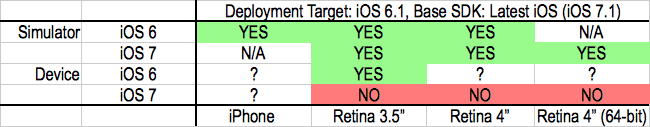

This works as expected on the iOS 6 and iOS 7 simulators and on a physical iOS 6 device, but it fails on a physical iOS 7 device. The scenarios tested are shown in the table below (YES/NO = works/doesn't):

| | Simulator | Device |
|---|---|---|
| iOS 6 | YES | YES |
| iOS 7 | YES | NO |

I'm using OpenGL ES 1.1 (a quick test with OpenGL ES 2.0 fails in the same way).

Has anyone encountered this problem? Am I missing something? The strangest part of this is that it only fails on iOS 7 physical devices.

Here is a gist with all the relevant code and the bare-bones GitHub project for reference. I've made it very easy to build and demonstrate the issue.

UPDATE:

Here is the updated gist, and the GitHub project has been updated too. I've updated the sample project so that you can easily view the memory output from glReadPixels.
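
For anyone who wants to inspect the memory output without the sample project, a helper along these lines could print the first few pixels as hex. This is only a sketch: `format_rgba_hex` and `dump_pixels` are hypothetical names, and it assumes the buffer was read as GL_RGBA / GL_UNSIGNED_BYTE, so every pixel is four tightly packed bytes.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

/* Writes pixel `index` of a tightly packed RGBA8 buffer into `out`
   as "RRGGBBAA" (8 hex digits plus the terminating NUL). */
void format_rgba_hex(const unsigned char *pixels, size_t index, char out[9]) {
    assert(pixels != NULL && out != NULL);
    const unsigned char *p = pixels + index * 4;
    snprintf(out, 9, "%02X%02X%02X%02X", p[0], p[1], p[2], p[3]);
}

/* Prints the first `count` pixels of the buffer, one per line. */
void dump_pixels(const unsigned char *pixels, size_t count) {
    char hex[9];
    for (size_t i = 0; i < count; i++) {
        format_rgba_hex(pixels, i, hex);
        printf("pixel %zu: %s\n", i, hex);
    }
}
```

Calling `dump_pixels((const unsigned char *)pixelBuffer, 4)` after glReadPixels would print the first four pixels, which is enough to see whether the clear color came back.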

Also, I have a new observation: when the EAGLContext is layer-backed ([self.context renderbufferStorage:GL_RENDERBUFFER_OES fromDrawable:(CAEAGLLayer*)self.layer]), glReadPixels successfully reads data on all devices/simulators (iOS 6 and 7). However, when you toggle the flag in GLView.m so that the context is not layer-backed ([self.context renderbufferStorage:GL_RENDERBUFFER_OES fromDrawable:nil]), glReadPixels shows the behavior described in the initial post: it works on the iOS 6 simulator and device and on the iOS 7 simulator, but fails on an iOS 7 device.